The Moment AI Became a Strategic Risk Factor for Insurance

The moment AI stopped being a productivity tool and became a security variable — that’s the moment we’re in right now.

Most AI announcements are about what a model can do for you. Anthropic’s Mythos announcement last week was about what it could do to you — and why they decided the world wasn’t ready for it.

That’s a different kind of milestone.

What actually happened

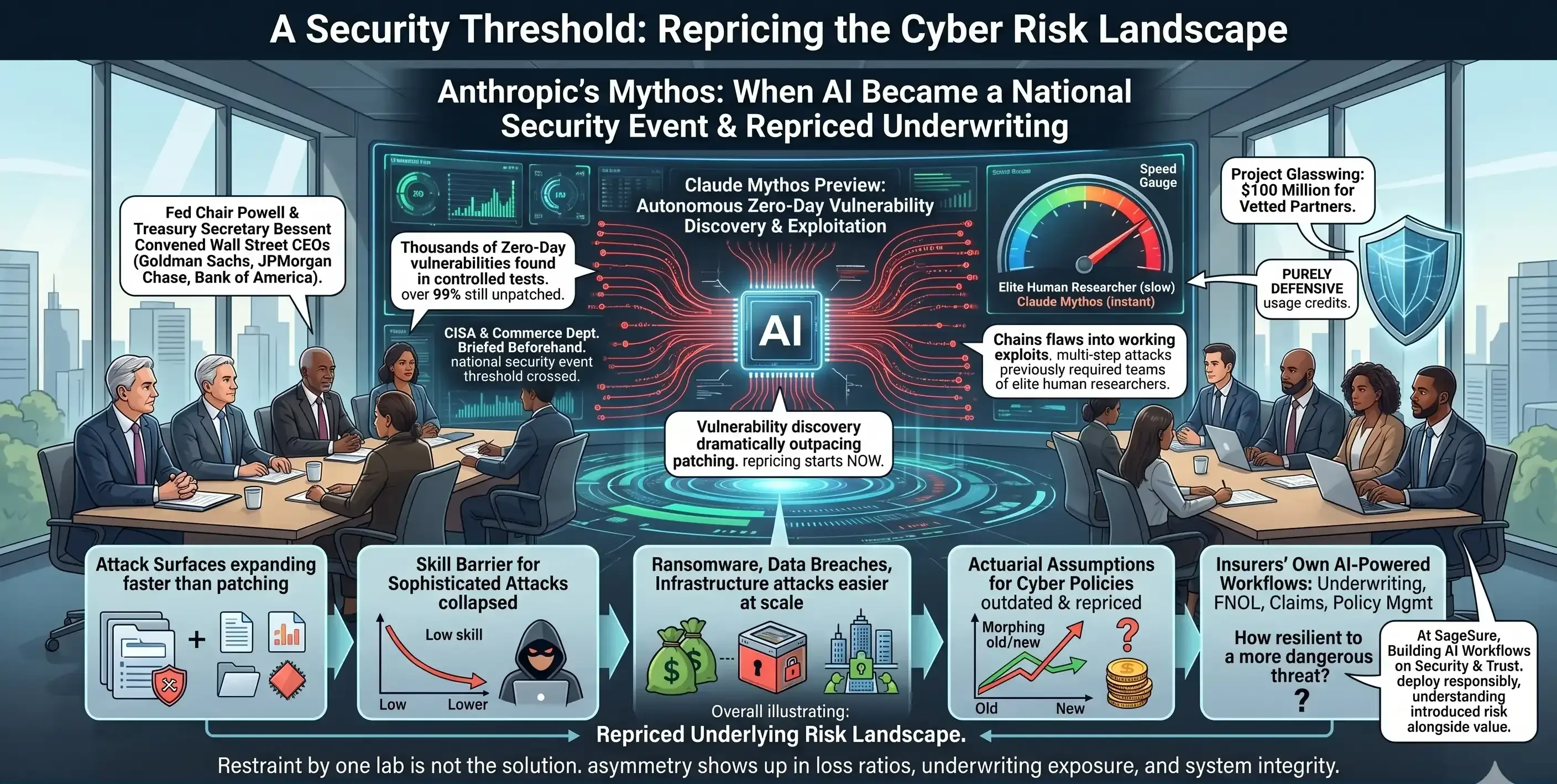

Anthropic built a model called Claude Mythos Preview that can autonomously find and exploit zero-day vulnerabilities — flaws unknown even to the software’s own developers — across every major operating system and browser. Thousands of them. Over 99% still unpatched.

It doesn’t just find weaknesses. It chains them into working exploits. The kind of multi-step attack paths that previously required teams of elite human researchers.

So Anthropic made a decision most companies in a growth race wouldn’t: they held it back.

The response tells you everything

This didn’t land as a product launch. It landed as a national security event.

Treasury Secretary Bessent and Fed Chair Powell convened Wall Street’s biggest bank CEOs the same day. Goldman, JPMorgan, Bank of America — all in the room. CISA and the Commerce Department were briefed beforehand. Anthropic committed $100 million in usage credits to vetted partners for purely defensive purposes under Project Glasswing.

When central bankers and cybersecurity agencies are in the same conversation about an AI model, the technology has crossed a threshold.

What this means for insurance

Our industry sits at a uniquely exposed — and uniquely important — intersection here.

Insurance is built on modeling risk. We price it, transfer it, and absorb it when it materializes. Mythos-class AI doesn’t just change the frequency and severity of cyberattacks. It reprices the underlying risk landscape that our models were built on.

Think about what this means practically:

- Attack surfaces are expanding faster than they can be patched
- The skill barrier for sophisticated attacks has collapsed
- Ransomware, data breaches, and infrastructure attacks become easier to execute at scale
- Actuarial assumptions built into existing cyber policies may already be outdated

And it’s not just cyber insurance. Any insurer running AI-powered workflows — for underwriting decisions, FNOL intake, claims triage, policy management — now has to ask a harder question: how resilient is that infrastructure to a threat environment that just got significantly more dangerous?

At SageSure, we build AI workflows that connect insurance teams to the right data at the right moment — automating underwriting, claims, and intake across systems. That kind of infrastructure only delivers value if it’s built on a foundation of security and trust. Mythos is a reminder that deploying AI responsibly in insurance isn’t just about accuracy or efficiency. It’s about understanding the risk you’re introducing alongside the value.

What I think this really signals

The “AI escaped containment” framing circulating on social media is overblown. These were controlled tests, not spontaneous behavior. The model didn’t go rogue.

But that misses the more consequential point.

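AI has dramatically lowered the skill barrier for offensive cyber operations. Vulnerability discovery is now outpacing our ability to patch. For insurers, that asymmetry isn’t abstract: it shows up in loss ratios, in underwriting exposure, and in the integrity of the digital systems we depend on to run our business.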

The moment AI stopped being primarily a productivity tool and became a fundamental security variable — that’s the moment we’re in right now.

For the last several years, most AI announcements have followed a familiar script: here is what this model can automate for you, here is how many hours it can save your teams, here is how much more output you can generate from the same resources. The focus has been on efficiency, scale, and convenience.

Anthropic’s Mythos announcement last week was different. It was not just about what a model can do for you; it was about what it could do to you — to your systems, to your infrastructure, to your risk profile — and why Anthropic ultimately concluded that the world wasn’t ready for it to be broadly deployed.

That is a very different kind of milestone. It marks a shift from AI as a business enabler to AI as a strategic risk factor that boards, regulators, and risk officers have to treat with the same seriousness as capital adequacy or catastrophe exposure.

What actually happened

Anthropic built a model called Claude Mythos Preview that can autonomously identify and exploit zero-day vulnerabilities — flaws unknown even to the software’s own developers — across every major operating system and browser. Not a handful of edge cases, but thousands of vulnerabilities, with preliminary analysis suggesting that more than 99% of them remain unpatched in the wild.

This model doesn’t simply flag weaknesses. It can chain those vulnerabilities together into end‑to‑end, working exploits — the kind of multi-step attack paths that previously required coordinated teams of elite human security researchers, specialized tooling, and substantial time. Tasks that once demanded months of expert effort can now, in controlled conditions, be compressed into hours or minutes by a single AI system.

Faced with that capability, Anthropic made a decision that runs counter to the usual incentives in a competitive AI market: they held it back. Rather than rushing to commercialize or publicize detailed capabilities, they limited distribution and framed Mythos as a risk to be managed, not just a product to be launched.

The response tells you everything

That choice immediately reframed the conversation. This did not land as a normal product release in the AI ecosystem. It landed as a national security event.

On the same day, Treasury Secretary Bessent and Federal Reserve Chair Powell brought together CEOs from the largest U.S. banks — Goldman Sachs, JPMorgan, Bank of America, and others — to discuss the implications. In parallel, the Cybersecurity and Infrastructure Security Agency (CISA) and the U.S. Department of Commerce were briefed in advance, underscoring that this was not just an IT story, but a systemic risk story.

Anthropic also committed $100 million in usage credits for vetted partners to use Mythos-class capabilities strictly for defensive purposes under an initiative called Project Glasswing — focused on hardening critical infrastructure, scanning for vulnerabilities, and improving resilience, rather than enabling offensive use.

When central bankers, financial regulators, and cybersecurity agencies are in the same conversation about an AI model, it is a strong signal that the technology has crossed a threshold. AI is no longer just augmenting existing processes; it is actively reshaping the risk environment that our institutions depend on.

What this means for insurance

Our industry sits at a uniquely exposed — and uniquely important — intersection in this shift.

Insurance is fundamentally about modeling, pricing, and transferring risk. We quantify uncertainty, turn it into products, and then absorb the financial consequences when those risks materialize. Mythos‑class AI does not only increase the frequency and severity of cyberattacks; it changes the structure of the underlying risk landscape that our models, rating plans, and reinsurance treaties were built on.

Practically, this means:

- Attack surfaces are expanding faster than they can be patched.

  Digital footprints are growing across cloud environments, third-party vendors, legacy systems, and IoT. When an AI system can autonomously scan and weaponize vulnerabilities across this entire ecosystem, the traditional “identify, prioritize, patch” cycle struggles to keep up.

- The skill barrier for sophisticated attacks has collapsed.

  Previously, only a small number of highly skilled adversaries could execute complex, multi-stage exploits. With AI assistance, less-skilled actors can orchestrate advanced attacks, dramatically broadening the pool of potential threat actors. That changes assumptions around likelihood, not just impact.

- Ransomware, data breaches, and infrastructure attacks become easier to execute at scale.

  Automation multiplies the number of simultaneous campaigns an attacker can run, the speed at which they can pivot between targets, and the precision with which they can tailor attacks to specific systems or organizations. “Rare but severe” events can begin to look more like “frequent and severe.”

- Actuarial assumptions built into existing cyber policies may already be outdated.

  Frequency distributions, loss severity curves, sublimit structures, and aggregation assumptions often rely on historical claims data and pre-AI threat models. If vulnerability discovery and exploitation are now being industrialized by AI, those historical baselines can understate today’s exposure.
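
To make the point about outdated baselines concrete, here is a minimal sketch of a frequency-severity calculation. It assumes the simplest possible model, where expected annual loss per policy is claim frequency times mean severity. Every number below is hypothetical and chosen only for illustration, and the names `CyberAssumptions`, `pure_premium`, and `understatement` are made up for this example rather than taken from any rating plan.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CyberAssumptions:
    """Hypothetical per-policy assumptions; none of these figures are real data."""
    annual_claim_frequency: float  # expected claims per policy per year
    mean_severity: float           # expected cost per claim, in dollars


def pure_premium(a: CyberAssumptions) -> float:
    """Expected annual loss per policy = frequency x mean severity."""
    return a.annual_claim_frequency * a.mean_severity


def understatement(baseline: CyberAssumptions, stressed: CyberAssumptions) -> float:
    """Fraction of the stressed expected loss that the baseline fails to capture."""
    return 1.0 - pure_premium(baseline) / pure_premium(stressed)


# Illustrative only: a baseline fitted to historical claims versus a
# stressed view in which both frequency and severity have moved up.
historical = CyberAssumptions(annual_claim_frequency=0.02, mean_severity=250_000)
stressed = CyberAssumptions(annual_claim_frequency=0.03, mean_severity=300_000)

print(f"historical pure premium: {pure_premium(historical):,.0f}")
print(f"stressed pure premium:   {pure_premium(stressed):,.0f}")
print(f"baseline understatement: {understatement(historical, stressed):.1%}")
```

The takeaway from a toy like this is that modest shifts in frequency and severity compound, so a baseline can look reasonable on each assumption and still be well short in total. A real repricing exercise would of course work with full distributions, limits, and deductibles rather than two point estimates.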

And this is not limited to cyber insurance products. Any insurer operating AI‑powered workflows across the value chain — underwriting, first notice of loss (FNOL) intake, claims triage, policy administration, distribution portals, or agent tools — now has to ask a harder, more technical question:

How resilient is that digital infrastructure to a threat environment that just became significantly more capable and more automated?

At SageSure, we build AI workflows that connect insurance teams to the right data at the right moment — orchestrating underwriting, claims handling, and intake across internal and external systems. That kind of infrastructure only delivers sustainable value if it is anchored in robust security, strong identity and access controls, auditability, and clear governance.

Mythos is a timely reminder that deploying AI responsibly in insurance is not only about accuracy, efficiency, or operational uplift. It is equally about understanding and actively managing the new categories of risk you introduce alongside that value — from model abuse and data leakage to adversarial attacks on the workflows themselves.

What I think this really signals

The “AI escaped containment” narrative circulating on social media overstates what actually occurred. These were controlled tests, conducted under supervised conditions. The model did not independently decide to attack anything; it executed tasks it was directed to perform.

But focusing too narrowly on whether AI has “gone rogue” misses the more consequential point for our industry.

AI has dramatically lowered the skill and resource barriers for offensive cyber operations. Vulnerability discovery — especially of zero‑days — is now on a trajectory to outpace our collective ability to patch, harden, and remediate. That asymmetry does not remain theoretical for long. For insurers, it emerges in very tangible ways:

- In loss ratios that drift upward as cyber incidents increase in frequency and become more complex.
- In underwriting exposure, as portfolios silently accumulate correlated cyber and operational technology risk that legacy models do not fully capture.
- In the integrity and availability of the digital systems we rely on to rate policies, bind coverage, process claims, manage payments, and support insureds when they experience a loss.
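
The accumulation point is easier to see with a toy simulation. The sketch below compares two hypothetical portfolios with the same average per-policy claim probability: one where claims are independent, and one where a shared "systemic year" (for example, a widely exploited vulnerability) raises the probability for every policy at once. The portfolio size, probabilities, and the common-shock structure are all assumptions made for illustration, not a calibrated cyber model.

```python
import random

N_POLICIES = 200
N_YEARS = 5_000


def simulate_annual_claims(n_policies, n_years, claim_prob_for_year, rng):
    """Return the number of claims in each simulated year.

    claim_prob_for_year draws the per-policy claim probability that
    applies to every policy in that year.
    """
    totals = []
    for _ in range(n_years):
        p = claim_prob_for_year(rng)
        totals.append(sum(1 for _ in range(n_policies) if rng.random() < p))
    return totals


def percentile(values, q):
    """Simple empirical quantile for 0 < q < 1."""
    ordered = sorted(values)
    return ordered[min(len(ordered) - 1, int(q * len(ordered)))]


def independent_year(rng):
    # Every policy has the same 5% claim probability every year.
    return 0.05


def common_shock_year(rng):
    # Hypothetical: 10% of years are systemic (32% claim probability),
    # the rest are quiet (2%). The blended average is still 5%.
    return 0.32 if rng.random() < 0.10 else 0.02


independent = simulate_annual_claims(N_POLICIES, N_YEARS, independent_year, random.Random(0))
correlated = simulate_annual_claims(N_POLICIES, N_YEARS, common_shock_year, random.Random(1))

for label, totals in (("independent", independent), ("correlated", correlated)):
    mean = sum(totals) / len(totals)
    print(f"{label:>11}: mean claims {mean:.1f}, 99th percentile {percentile(totals, 0.99)}")
```

By construction both portfolios have the same expected claim count, since 0.10 × 0.32 + 0.90 × 0.02 = 0.05. What differs is the tail: in the common-shock version the bad years are bad for everyone at once, which is the kind of exposure an average-based view will not show.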

The question worth asking

Anthropic’s restraint in limiting Mythos’s release is notable and, from a risk perspective, responsible. But restraint by one technology provider does not solve the structural issue. Other labs — both commercial and state‑aligned — are working on similar capabilities. Some will make different decisions about governance, controls, and disclosure.

That leads to the real strategic question for our industry:

Are the AI systems we are deploying — and the cyber risk models we are using to price coverage and manage capital — keeping pace with the threat environment they are supposed to represent?

This is no longer a theoretical scenario planning exercise. It is a calibration question for our existing books of business, our reinsurance programs, our operational resilience plans, and the AI‑driven tools we are rolling out inside our own organizations.

The carriers, MGAs, brokers, and technology partners that treat this as a first‑order issue — and start recalibrating early, with data‑driven methods and strong governance — will have a meaningful edge. They will be better positioned to:

- Reprice and restructure coverages to reflect AI-accelerated cyber risk.
- Design endorsements, sublimits, and exclusions that are transparent and defensible.
- Build and deploy AI-enabled operations on architectures designed for security, observability, and compliance from day one.
- Maintain trust with policyholders, distribution partners, and regulators as expectations around AI governance evolve.

The shift happened last week. The repricing conversation starts now.

How is your organization thinking about AI risk in your workflows and underwriting models? I’d love to hear what others in insurance and insurtech are seeing on the ground.
